Users can now request access to the CAIDA BGP2GO platform (https://bgp2go.caida.org). BGP2GO lets users find the MRT files that contain a specific resource, and thus avoid downloading and processing unrelated data. Users can compile a customized list of relevant MRT files, share that exact list with others, or stream the matching MRT files (e.g., using BGPStream).

Finding the needle in the haystack

Public BGP route collectors receive update messages from over 1,000 BGP routers worldwide. These updates are archived and made available for download and analysis. However, the data is organized in a way that often requires downloading vast amounts of unrelated information, making it increasingly difficult to find the needle in the haystack.

Imagine you’re a network operator announcing a new prefix and you want to analyze its propagation. You may not know exactly which collector or MRT file contains the relevant data, forcing you to download and sift through unnecessary information.

BGP2GO solves this problem by allowing users to easily select only the files they need, saving significant time and effort.

To make this possible, we have developed a comprehensive index of all prefixes, ASNs, and communities that appear in RouteViews update files (https://archive.routeviews.org). BGP2GO (https://bgp2go.caida.org) is the user interface to that index: it lets you look up a resource and select the specific files that contain it.
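To illustrate the idea of such an index, here is a minimal Python sketch of an inverted index that maps each resource (prefix, ASN, or community) to the files mentioning it. This is not how BGP2GO is implemented; the file names, the `AS64496`-style labels, and the helper names `build_index` and `lookup` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def build_index(file_contents):
    """Map each resource (prefix, ASN, or community) to the set of files that mention it."""
    index = defaultdict(set)
    for filename, resources in file_contents.items():
        for resource in resources:
            index[resource].add(filename)
    return index


def lookup(index, resource):
    """Return the sorted list of files that contain the resource (empty if none)."""
    return sorted(index.get(resource, ()))


# Hypothetical per-file summaries. In a real index these sets would be
# extracted from the MRT update files themselves.
FILES = {
    "collector-a/updates.20240801.0000": {"184.164.246.0/24", "AS64496", "64496:100"},
    "collector-a/updates.20240801.0015": {"AS64496"},
    "collector-b/updates.20240801.0000": {"184.164.246.0/24", "AS64497"},
}

index = build_index(FILES)
matching = lookup(index, "184.164.246.0/24")
print(matching)
```

With an index of this shape, a lookup touches only the entry for the requested resource, so files that never mention it are not opened at all.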

Use Case: Analyzing Prefix Propagation with PEERING Testbed, BGP2GO, and BGPStream

Let’s walk through a real-world example. Suppose you’re a network operator advertising a prefix and want to examine how it propagates across RouteViews collectors. Using the PEERING testbed (https://peering.ee.columbia.edu/), we performed a controlled advertisement of the prefix 184.164.246.0/24 during August 2024.

Step 1: Looking up the prefix and setting filters

In this case, we search for the prefix 184.164.246.0/24 in BGP2GO, filtering for data from August 2024. The platform identifies 33,251 announcements and withdrawals, spread across 746 files (2.32 GB) from 26 collectors. This curated selection lets us focus only on the data we need, saving time and resources.

https://bgp2go.caida.org/details?pre=184.164.246.0/24&years=2024&months=8
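The link above encodes the search in its query string, so a similar link can be assembled programmatically. The sketch below assumes only the three parameters visible in that URL (`pre`, `years`, `months`); whether BGP2GO accepts other parameters or multiple values per parameter is not covered here, and the helper name `details_url` is hypothetical.

```python
from urllib.parse import urlencode

BASE_URL = "https://bgp2go.caida.org/details"


def details_url(prefix, year, month):
    """Build a BGP2GO details link for one prefix, year, and month."""
    # safe="/" keeps the slash of the prefix length unescaped, as in the link above.
    query = urlencode({"pre": prefix, "years": year, "months": month}, safe="/")
    return f"{BASE_URL}?{query}"


url = details_url("184.164.246.0/24", 2024, 8)
print(url)
```

Because the link fully describes the selection, it is a convenient way to share the same search with collaborators.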

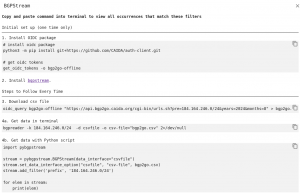

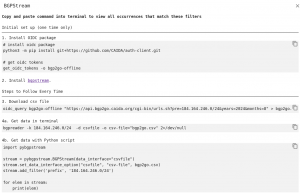

Step 2: Stream selected files in the terminal

Once the relevant MRT files are selected, you can stream them for further processing using BGPStream. Clicking the BGPSTREAM button in the top right corner, right above the “collectors” chart, shows instructions on how to stream the files in your terminal.

For this example, we use the following command (see step 4a above) in the terminal to process the relevant files:

bgpreader -k 184.164.246.0/24 -d csvfile -o csv-file="bgp2go.csv"

This command uses bgpreader to read the files and output the lines that pertain to the resource (prefix 184.164.246.0/24). The following screenshot shows an excerpt of routes related to the prefix 184.164.246.0/24 extracted from all files that contain this prefix in August 2024.

To learn more about the bgpreader command and its options, visit the BGPStream website (https://bgpstream.caida.org/).
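Once bgpreader has printed its lines, a short script can summarize them, for example by counting announcements and withdrawals per collector. The Python sketch below assumes the pipe-delimited element layout of BGPStream v2 (the field order in `FIELDS` is an assumption to check against your own output), and the three sample lines are synthetic, using documentation ASNs and addresses rather than real routing data.

```python
from collections import Counter

# Assumed bgpreader (BGPStream v2) element layout; verify against your own output.
FIELDS = [
    "rec_type", "elem_type", "timestamp", "project", "collector",
    "router", "router_ip", "peer_asn", "peer_ip", "prefix",
    "next_hop", "as_path", "origin_asn", "communities",
    "old_state", "new_state",
]


def parse_elem(line):
    """Split one pipe-delimited line into a field-name -> value dict."""
    return dict(zip(FIELDS, line.rstrip("\n").split("|")))


def summarize(lines, prefix):
    """Count announcements (A) and withdrawals (W) of `prefix` per collector."""
    counts = Counter()
    for line in lines:
        elem = parse_elem(line)
        if elem.get("prefix") == prefix and elem.get("elem_type") in ("A", "W"):
            counts[(elem["collector"], elem["elem_type"])] += 1
    return counts


# Synthetic lines for illustration only (not real bgpreader output).
SAMPLE = [
    "U|A|1722470400.000000|routeviews|route-views2|||64496|192.0.2.1|184.164.246.0/24|192.0.2.1|64496 64497 64498|64498|||",
    "U|W|1722474000.000000|routeviews|route-views2|||64496|192.0.2.1|184.164.246.0/24||||||",
    "U|A|1722470460.000000|routeviews|route-views.linx|||64499|198.51.100.7|184.164.246.0/24|198.51.100.7|64499 64498|64498|||",
]

counts = summarize(SAMPLE, "184.164.246.0/24")
for (collector, elem_type), n in sorted(counts.items()):
    print(collector, elem_type, n)
```

In practice the lines would come from bgpreader's standard output (for instance redirected to a file) instead of the `SAMPLE` list.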

Getting Access to BGP2GO

To request access to the BGP2GO platform, first create an account with the CAIDA Services Single Sign On (SSO) system (https://auth.caida.org/realms/CAIDA/account) by providing basic information and authenticating via Keycloak. After authentication, you can request access to the BGP2GO platform (which requires a CAIDA-authorized account with the bgp2go-api:read role) by going to https://bgp2go.caida.org/ and filling out the request form. If you have any questions or problems, please contact us at data-info@caida.org.