Targeted Serendipity: the Search for Storage

April 4th, 2012 by Josh Polterock

On the heels of our recent press release regarding fresh publications that make use of the UCSD Network Telescope data, we would like to take a moment to thank the institutions that have helped preserve this data over the last eight years. Though we recently received an NSF award to enable near-real-time sharing of this data as well as improved classification, the award does not cover the cost of maintaining this historic archive. At current UCSD rates, the 104.66 TiB would cost us approximately $40,000 per year to store. This does not take into account the metadata we have collected, which adds roughly 20 TB to the original data. As a result, we had spent the last several months indexing this data in preparation for deleting it forever.

Then, last month, I had the opportunity to attend the Security at the Cyberborder Workshop in Indianapolis. This workshop focused on how the NSF-funded IRNC networks might (1) capture and articulate technical and policy cybersecurity considerations related to international research network connections, and (2) capture opportunities and challenges for those connections to foster cybersecurity research. I did not expect to find a new benefactor for storage of our telescope data at the workshop, but in fact I did.

During the workshop, I mentioned to the group that we were preparing to purge historic darknet data for lack of funds to pay for storage. Upon hearing of our plans to delete the data, a NERSC System Administrator offered to store the data in NERSC’s tape archive. He understood the relevance of the data to cybersecurity research and the value of longitudinal analysis on this fairly rare and unique data type. In less than a month, we had accounts and began the work of moving the data.

CAIDA would like to thank the San Diego Supercomputer Center for archiving the UCSD Network Telescope data since 2003. The IBM HPSS and more recently Sun SamQFS archival storage systems dutifully preserved and delivered the 100+ Terabytes of raw pcap traces we have archived over the last eight years.

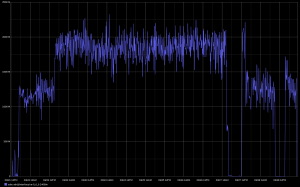

We would also like to thank the National Energy Research Scientific Computing Center (NERSC) and ESnet for the resources that allowed us to continue to preserve this data. On 22 March 2012, we started the transfer via ESnet to the NERSC HPSS facilities; Figure 1 shows it in flight. The transfer completed in roughly one week and sustained an average of 1.52 Gbps, limited by local host disk I/O.
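As a rough sanity check on those numbers, the sketch below estimates how long an archive of 104.66 TiB takes to move at a sustained 1.52 Gbps. It assumes binary tebibytes (2^40 bytes) and decimal gigabits per second (10^9 bits), and it ignores protocol overhead and pauses; the function name `transfer_days` is ours, for illustration only.

```python
TIB_BYTES = 2**40          # one tebibyte in bytes (binary unit)
GBPS_BITS = 10**9          # one gigabit per second in bits/s (decimal unit, assumed)
SECONDS_PER_DAY = 86_400


def transfer_days(size_tib: float, rate_gbps: float) -> float:
    """Estimate transfer time in days at a constant sustained rate."""
    total_bits = size_tib * TIB_BYTES * 8
    seconds = total_bits / (rate_gbps * GBPS_BITS)
    return seconds / SECONDS_PER_DAY


# Figures quoted in this post: 104.66 TiB at an average of 1.52 Gbps.
days = transfer_days(104.66, 1.52)
print(f"Estimated transfer time: {days:.1f} days")
```

Under these assumptions the estimate comes out at about seven days, in line with the roughly one week we observed.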

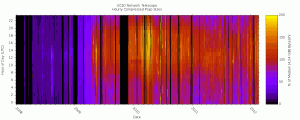

Figure 2 presents a heat map visualization of the data collection volume. Each vertical bar represents one day of data, while the horizontal bands represent the hours of the day, each colored by the size of the compressed file (in pcap format) of traffic captured during that hour. We color each data point based on its deviation from the median hourly file size. Specifically, we color an hour whose file size equals the median red, we color hours with (compressed) traffic volumes at twice the median or more yellow, and hours with no data appear black. So, hotter colors mean more data. Data collection on the telescope is a best-effort service; outages show up as vertical black bars. This plot also reveals an increase in the amount of data stored after April 2009, due to the removal of an upstream rate-limit filter on incoming packets. (We removed that filter in the wake of the Conficker worm's emergence, in order to study it.) The color changes in the heat map also show the diurnal variation in traffic volume, although since this type of traffic originates from most time zones of the world, the “busy hour” is not sharply delineated.
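For readers who want to build a similar plot, here is a minimal sketch of the color rule described above. It is not the script that produced Figure 2. The anchor points (no data is black, the median is red, twice the median or more is yellow) come from the description; the linear ramps between those points, the function name `hour_color`, and the sample sizes are assumptions made for illustration.

```python
from statistics import median


def hour_color(size_bytes, median_bytes):
    """Map one hourly file size to an (r, g, b) tuple with channels in 0..1.

    Anchors from the text: no data -> black, median -> red,
    twice the median or more -> yellow. The linear interpolation
    between anchors is an assumption of this sketch.
    """
    if size_bytes is None or size_bytes <= 0 or median_bytes <= 0:
        return (0.0, 0.0, 0.0)                # missing hour: black
    ratio = min(size_bytes / median_bytes, 2.0)  # clamp at 2x the median
    if ratio <= 1.0:
        return (ratio, 0.0, 0.0)              # black -> red up to the median
    return (1.0, ratio - 1.0, 0.0)            # red -> yellow up to 2x the median


# Hypothetical hourly file sizes (arbitrary units); None marks an outage.
sizes = [120, None, 100, 250, 80]
med = median([s for s in sizes if s is not None])
colors = [hour_color(s, med) for s in sizes]
```

Computing the median over non-missing hours only keeps outages from dragging the reference point toward zero.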